Doom3 BFG Source Code Review: Multi-threading (Part 2 of 4) >>

Doom III engine was written from 2000 to 2004, at a time where most PC had only one CPU. Even though idTech4 architecture and design accounted for SMP machines, it ended up being a mono-threaded game at last minute (see my Q&A with John Carmack). Since then, things have changed a lot. This is well summarized by Microsoft article "Coding For Multiple Cores" :

Doom III engine was written from 2000 to 2004, at a time where most PC had only one CPU. Even though idTech4 architecture and design accounted for SMP machines, it ended up being a mono-threaded game at last minute (see my Q&A with John Carmack). Since then, things have changed a lot. This is well summarized by Microsoft article "Coding For Multiple Cores" :

For years the performance of processors has increased steadily, and games and other programs have reaped the benefits of this increasing power without having to do anything special. The rules have changed. The performance of single processor cores is now increasing very slowly, if at all. However, the computing power available in a typical computer or console continues to grow. The difference is that most of this performance gain now comes from having multiple processor cores in a single machine, often in a single chip. The increases in available processing power are just as dramatic as in the past, but now developers have to write multi-threaded code in order to use this power.

The target platforms of Doom III BFG all feature multiple cores:

- Xbox 360 hardware features one triple-core Xenon CPU. With simultaneous multi-threading the platform accounts for 6 logical cores.

- PS3 hardware features one PPE and six SPEs.

- PC hardware commonly features quad-core. With hyper-threading those platforms account for 8 logical cores.

As a result, idTech4 has been boosted not only with multiple threads but also with idTech5 "Job Processing System" which harness multi-core machines.

Trivia : The Xbox One and PS4 hardware specs have been recently announced: Both will feature eight cores. An other strong indicator that in order to be relevant any game developer will have to be good at multi-threading programming.

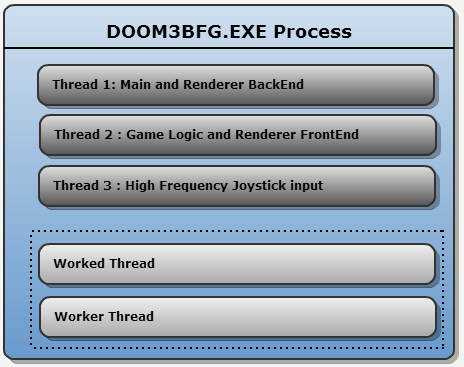

Doom 3 BFG Threading Model

Doom3 BFG is heavily multi-threaded. On PC the game starts with three threads:

Doom3 BFG is heavily multi-threaded. On PC the game starts with three threads:

- Thread dedicated to the Renderer Backend (Sends draw commands to GPU).

- Thread dedicated to Game Logic and Renderer Frontend (Generates draw commands).

- Thread dedicated to High frequency (250Hz) joystick sampling.

Additionally, idTech4 spawns two Worker Threads. They are here to assist any of the three Sovereign threads. They are managed by an in-house Scheduler when possible.

Core idea

id Software solution to the multi-core challenge was made public in the 2009 presentation "Beyond Programming Shaders". The two core concepts are:

- Decompose processing task as "jobs" that are performed by "workers" threads.

- Avoid delegating synchronization to the operating system: Do it yourself with atomic operations.

Building blocks

The system relies on three items:

- Jobs

- Workers

- Synchronization

A "Job" is exactly what one would expect:

struct job_t {

void (* function )(void *); // Job instructions

void * data; // Job parameters

int executed; // Job end marker...Not used.

};

Note : According to the comments in the code, "A job should be at least a couple of 1000 clock cycles in

order to outweigh any job switching overhead. On the other

hand a job should consume no more than a couple of

100,000 clock cycles to maintain a good load balance over

multiple processing units."

A "Worker" is a thread that will remain idle waiting for a signal. When it is awoken it tries to find jobs. The workers try to avoid synchronization by

using atomic operations while trying to fetch a job from a JobList.

"Synchronization" is performed via three primitives: Signals, Mutexes and Atomic operations. The latter are favored

since they allow the engine to retain CPU focus. Those three mechanisms implementation are detailed at the bottom of this page.

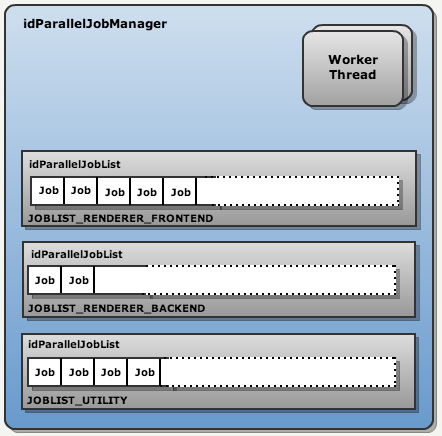

Architecture

The brain of that subSystem is the

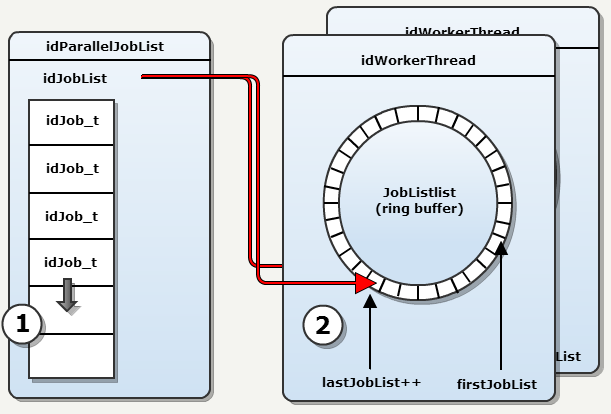

The brain of that subSystem is the ParalleleJobManager. It is responsible for spawning the workers threads and creating queues where Jobs are stored.

That is the first way synchronization is avoided: Divide the engine job posting system into multiple sections that are

accessed by one thread only and therefore require no synchronization. In the engine, queues are called idParallelJobList

In Doom III BFG only three sections are present:

On PC, two Worker Threads are created at startup but

probably more are created on XBox360 and PS3.

According to the 2009 presentation, idTech5 features many more sections:

- Collision detection

- Animation blend

- Obstacle avoidance

- Virtual texturing

- Transparency processing (foliage, particles)

- Cloth simulation

- Water surface simulation

- Detail model generation (rocks, pebbles etc.)

Note : The powerpoint also mentions the concept of one frame latency but this portion of the code is not in Doom III BFG.

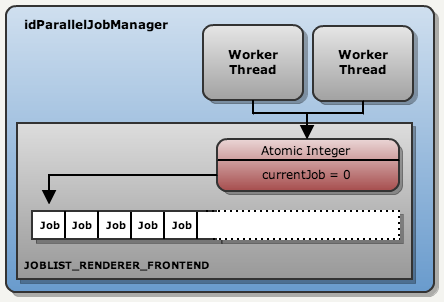

Jobs consumption :

Jobs consumption :

Workers run continuously and try to "find a job". This process requires no mutexes or monitors: An atomically incremented integer

distribute jobs with no overlaps.

Usage

Since jobs are segregated into sections accessed by only one thread, there is no synchronization required for adding a job. However, submitting a job to the worker system does involve a mutex. Here is a example where the renderer tries to find which lights are generating interactions :

//tr.frontEndJobList is a idParallelJobList object.

for ( viewLight_t * vLight = tr.viewDef->viewLights; vLight != NULL; vLight = vLight->next )

{

tr.frontEndJobList->AddJob( (jobRun_t)R_AddSingleLight, vLight );

}

tr.frontEndJobList->Submit();

tr.frontEndJobList->Wait();

Three parts:

Three parts:

- AddJob : No synchronization necessary, job is added to a vector.

- Submit : Mutex synchonization, each worker threads add the JobList to their own local ringer buffer list of JobLists.

- Wait : Signal synchonization (delegated to OS). Let the Worker threads complete.

How a Worker works

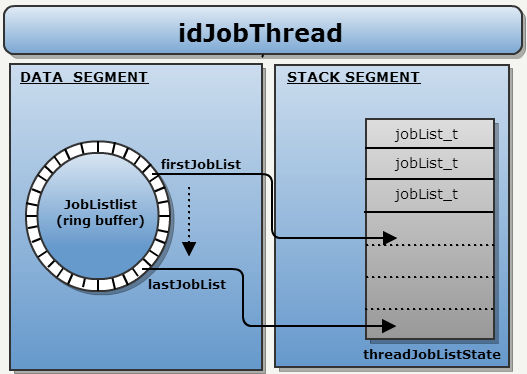

Workers are infinite loops. Each iteration the loop check if more JobList have been added to the ring buffer and if so copies the reference to the local stack.

Workers are infinite loops. Each iteration the loop check if more JobList have been added to the ring buffer and if so copies the reference to the local stack.

Local stack : The thread stack is used to store JobLists addresses as an anti-stalling mechanism. If a thread fails to "lock" a JobList, it falls in RUN_STALLED mode. This stall can be recovered from by navigating the local stack JobLists list in order to find an other jobList to visit . This way, "Yielding" is avoided.

The interesting part is that everything is is done with no Mutexes mechanisms: Only atomic operations are used.

Note : Avoiding Mutexes is pushed far: Sometimes they are no used even though they should have been for "correctness". Example: The copy from heap to stack uses lastJobList and firstJobList with no mutex. This mean that the copy can omit a JobList added concurrently on the ring buffer. It is wrong but it is ok: The worker will just stall and wait for a signal when the ring buffer operation is completed.

The infinite loop :

int idJobThread::Run() { threadJobListState_t threadJobListState[MAX_JOBLISTS]; while ( !IsTerminating() ) { int currentJobList = 0; // fetch any new job lists and add them to the local list in threadJobListState {} if ( lastStalledJobList < 0 ) // find the job list with the highest priority else // try to hide the stall with a job from a list that has equal or higher priority currentJobList = X; // try running one or more jobs from the current job list int result = threadJobListState[currentJobList].jobList->RunJobs( threadNum, threadJobListState[currentJobList], singleJob ); // Analyze how job running went if ( ( result & idParallelJobList_Threads::RUN_DONE ) != 0 ) { // done with this job list so remove it from the local list (threadJobListState[currentJobList]) } else if ( ( result & idParallelJobList_Threads::RUN_STALLED ) != 0 ) { lastStalledJobList = currentJobList; } else { lastStalledJobList = -1; } } }

Where jobs are run :

int idParallelJobList::RunJobs( unsigned int threadNum, threadJobListState_t & state, bool singleJob ) {

// try to lock to fetch a new job

if ( fetchLock.Increment() == 1 ) {

// grab a new job

state.nextJobIndex = currentJob.Increment() - 1;

// release the fetch lock

fetchLock.Decrement();

} else {

// release the fetch lock

fetchLock.Decrement();

// another thread is fetching right now so consider stalled

return ( result | RUN_STALLED );

}

// Run job

jobList[state.nextJobIndex].function( jobList[state.nextJobIndex].data );

// if at the end of the job list we're done

if ( state.nextJobIndex >= jobList.Num() ) {

return ( result | RUN_DONE );

}

return ( result | RUN_PROGRESS );

}

id Software Synchronization tools

id Software uses three types of synchronization mecanisms:

1. Monitors (idSysSignal) :

| Abstraction | Operations | Implemented with | Details |

| idSysSignal | Event Objects | ||

| Raise | SetEvent | Sets the specified event object to the signaled state. | |

| Clear | ResetEvent | Sets the specified event object to the nonsignaled state. | |

| Wait | WaitForSingleObject | Waits until the specified object is in the signaled state or the time-out interval elapses. |

Signals are mainly used to put a Thread to sleep. Workers uses idSysSignal.Wait() to remove themselves from the Operating System scheduler until jobs are available.

2. Mutexes (idSysMutex) :

| Abstraction | Operations | Implemented with | Details |

| idSysMutex | Critical Section Objects | ||

| Lock | EnterCriticalSection | Waits for ownership of the specified critical section object. The function returns when the calling thread is granted ownership. | |

| Unlock | LeaveCriticalSection | Releases ownership of the specified critical section object. | |

3. Atomic operations (idSysInterlockedInteger) :

| Abstraction | Operations | Implemented with | Details |

| idSysInterlockedInteger | Interlocked Variables | ||

| Increment | InterlockedIncrementAcquire | Increments (increases by one) the value of the specified 32-bit variable as an atomic operation. The operation is performed using acquire memory ordering semantics. | |

| Decrement | InterlockedDecrementRelease | Decrements (decreases by one) the value of the specified 32-bit variable as an atomic operation. The operation is performed with release memory ordering semantics. |